Pre-signed URLs let clients upload to and download from object storage directly, without sending file bytes through your API. In doing so, you’re issuing a temporary, signed permission for a specific storage operation. If you scope that permission too broadly, keep it alive too long, or skip verification steps, you can end up with data exposure, content safety issues, or cost abuse.

This post documents a safe default and its trade-offs.

System description

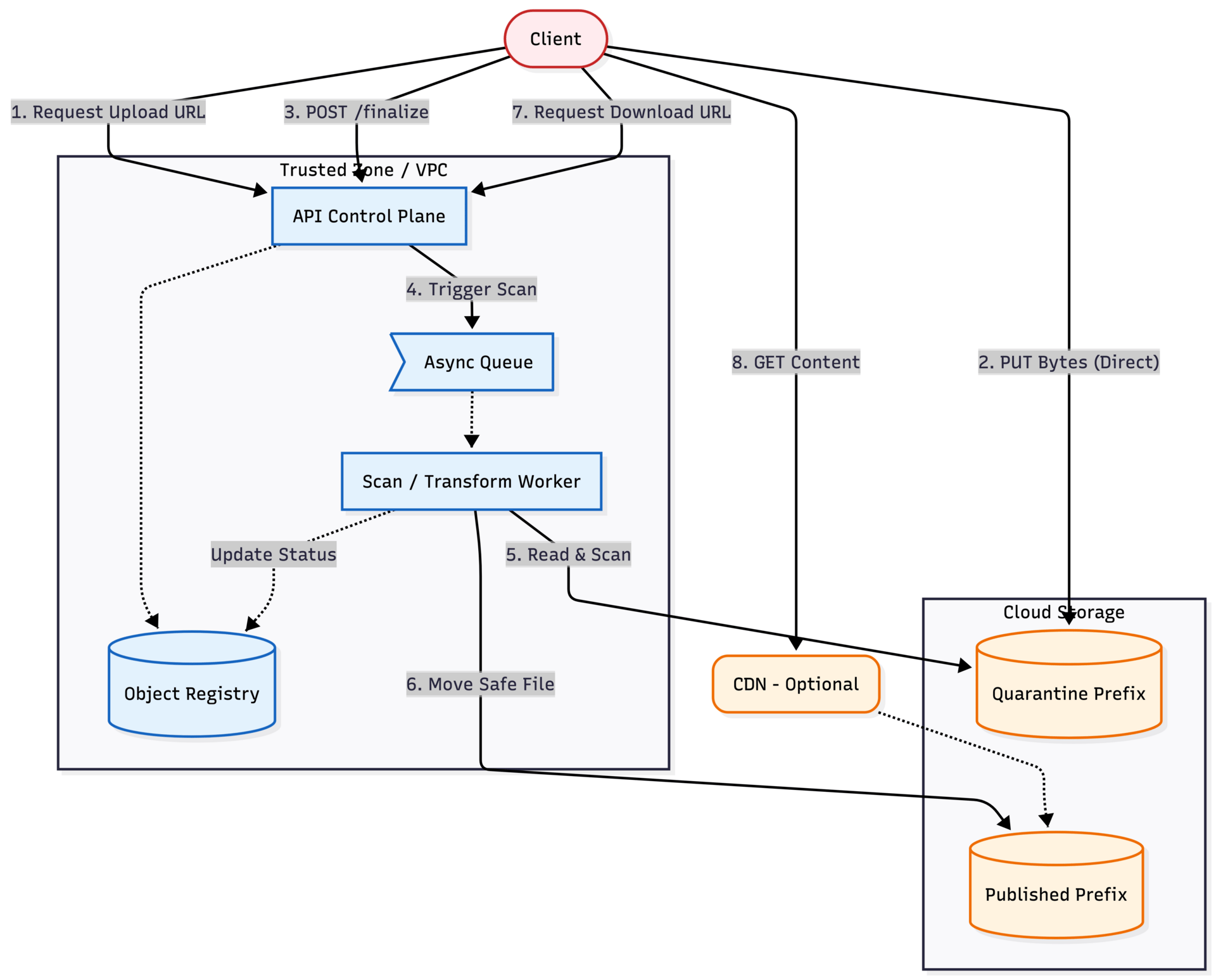

In this pattern, an API issues short-lived, exact-scope URLs for direct storage uploads / downloads. To keep the system safe, the app enforces a strict pipeline: quarantine → finalize → scan / transform → publish

Architecture choice

There are two common ways to handle file transfers, and the security trade-offs change depending on which one you pick.

Direct-to-storage (pre-signed URLs)

Your API authorizes the action and returns a short-lived signed URL. The client uploads / downloads directly to storage.

Use this when:

files can be large or frequent

you want the API to stay out of the data plane

you’re fine verifying and scanning after upload (before making the file usable)

Main risks: URL leakage, scope mistakes, weak tenant / user binding, and missing storage logs.

Proxy through an upload gateway

Your service receives bytes, applies policy inline, then writes to storage.

Use this when:

you must inspect or transform bytes before they land in durable storage

you want centralized traffic controls on the raw payload

Trade-off: the gateway becomes a bandwidth-heavy hot path and a DoS target. Inline parsing / inspection also increases attack surface.

Common middle ground: direct-to-storage for normal uploads, proxy only for high-risk classes (unknown types, archives / executables, regulated content).

Golden path

Build this first. Then relax constraints only if you have a specific reason:

Create session → server generates key → pre-sign PUT (120s) → upload to quarantine → finalize (HEAD + size + hash) → scan / transform → publish → pre-sign GET (60–300s)

Each step is a checkpoint: auth, upload, finalize, publish, download.

Related patterns:

If pre-signed URLs are part of a larger sharing product, see Multi-Tenant File Sharing: Secure Control Plane Architecture for object registry, tenant isolation, and share-link policy.

If uploads trigger customer callbacks, see Secure Webhook Delivery: Signing, Verification, and SSRF Prevention.

If finalize calls are retried by clients or workers, see Designing API Idempotency Keys to Prevent Duplicate Writes.

Minimal system context

API (control plane): authenticates user, creates upload session, generates pre-signed URLs, enforces finalize / download issuance

Object storage (data plane): receives PUT / GET directly from client using signed URLs

Metadata store (object registry): maps upload_id / object_id → tenant / user → state (created / uploaded / finalized / published)

Scanner / transform worker: pulls from quarantine, scans / transforms, writes to published prefix

Optional CDN: serves published objects with safe headers (never caches signed URLs)
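The state field kept by the object registry can be treated as a small state machine. The sketch below assumes the four states listed above advance strictly in order and that nothing may skip finalize; the `State` enum and `advance` helper are hypothetical names, not part of any library.

```python
from enum import Enum


class State(Enum):
    CREATED = "created"
    UPLOADED = "uploaded"
    FINALIZED = "finalized"
    PUBLISHED = "published"


# Assumption: each state may only move to the next one in the pipeline.
ALLOWED = {
    State.CREATED: {State.UPLOADED},
    State.UPLOADED: {State.FINALIZED},
    State.FINALIZED: {State.PUBLISHED},
    State.PUBLISHED: set(),
}


def advance(current: State, target: State) -> State:
    """Return the new state, or raise if the transition skips a checkpoint."""
    if target not in ALLOWED[current]:
        raise ValueError(f"illegal transition {current.value} -> {target.value}")
    return target
```

Routing every state change through one function like this makes a "published without finalize" bug a loud failure instead of a silent one.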

Minimal API shape

POST /uploads → returns { upload_id, object_id, put_url }

POST /uploads/{upload_id}/finalize

GET /objects/{object_id}/download-url → returns { get_url }

Clients should never send bucket names or object keys. They should only deal in upload_id and object_id.
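As a rough illustration of server-generated keys, the sketch below creates an upload session whose storage key is built only from server-side values. The `quarantine` prefix name, the key layout, and the `UploadSession` fields are assumptions made for this example.

```python
import uuid
from dataclasses import dataclass

QUARANTINE_PREFIX = "quarantine"  # hypothetical prefix name


@dataclass(frozen=True)
class UploadSession:
    upload_id: str
    object_id: str
    tenant_id: str
    user_id: str
    storage_key: str  # kept server-side in the object registry
    state: str = "created"


def create_upload_session(tenant_id: str, user_id: str) -> UploadSession:
    """tenant_id and user_id must come from the verified auth token."""
    upload_id = uuid.uuid4().hex
    object_id = uuid.uuid4().hex
    # The key is derived here; no client-supplied path segment is accepted.
    storage_key = f"{QUARANTINE_PREFIX}/{tenant_id}/{object_id}"
    return UploadSession(upload_id, object_id, tenant_id, user_id, storage_key)


def create_upload_response(session: UploadSession, put_url: str) -> dict:
    """Body for POST /uploads: opaque IDs plus the signed URL, nothing else."""
    return {
        "upload_id": session.upload_id,
        "object_id": session.object_id,
        "put_url": put_url,
    }
```

Because the client only ever echoes back `upload_id` or `object_id`, every later request can be resolved through the registry rather than by trusting a path.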

Security properties of pre-signed URLs

Pre-signing binds:

the HTTP method (PUT vs GET)

the exact bucket + object key

the expiration (TTL)

It does not guarantee:

file type safety (clients can lie; files can be polyglots)

safe content (HTML / SVG / scriptable formats)

perfect size enforcement for PUT in all setups

That’s why the flow uses quarantine + finalize, and often scan / transform before publish.
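To make the "binds" list concrete, here is a toy signer. It is not the scheme any real storage provider uses (for example, it is not AWS Signature Version 4); it is a plain HMAC over the method, bucket, key, and expiry, with a hypothetical secret and hypothetical function names. It only shows why changing any bound field invalidates the signature, and why nothing about the uploaded bytes is covered.

```python
import hashlib
import hmac

SECRET = b"demo-signing-secret"  # hypothetical; real signers use provider credentials


def sign(method: str, bucket: str, key: str, expires_at: int) -> str:
    """Sign exactly the fields a pre-signed URL is said to bind."""
    message = f"{method}\n{bucket}\n{key}\n{expires_at}".encode()
    return hmac.new(SECRET, message, hashlib.sha256).hexdigest()


def verify(method: str, bucket: str, key: str, expires_at: int,
           signature: str, now: int) -> bool:
    """Reject expired requests and any request whose bound fields changed."""
    if now > expires_at:
        return False
    expected = sign(method, bucket, key, expires_at)
    return hmac.compare_digest(expected, signature)
```

Note what is absent from the signed message: content type, file contents, and (in this toy) size. Those gaps are what quarantine and finalize exist to cover.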

Threat model

Baseline assumptions

The storage bucket is private (no anonymous / public reads)

Clients are untrusted: they can retry, replay, and lie about metadata (filename, MIME type, content)

Control plane authority: Your API can derive tenant / user context from the auth token (not the request body)

Objects are treated as untrusted until finalize succeeds; only “published” objects are eligible for end-user download

Standard infra controls such as TLS, WAF, database AuthN, SQLi prevention, etc. are assumed to be in place. This model focuses on the file transfer pattern

A note on risk: you won’t fix everything

The tables below are not a checklist where every row must be fully eliminated. Focus on preventing the worst failures and limiting blast radius. In practice: ship prevention for the High rows first, then add monitoring and response for what you can’t realistically prevent.

Phase 1: Intake (Pre-signing & Uploads)

Focus: Preventing unauthorized writes and ensuring tenant isolation

| Asset | Threat | Baseline Controls | Mitigation Options | Risk |
|---|---|---|---|---|
| Upload URL | PUT reuse: Attacker replays URL to overwrite content or drive up costs | Exact-key pre-sign | 1. One-shot keys: Generate unique key per session 2. Rotation: Detect multiple PUTs and invalidate / alert 3. TTL: Keep upload window short (e.g., < 15 mins) | Low |
| Capacity | Oversized Uploads: 5TB file in 10MB slot (Cost / DoS) | Auth required | 1. Embed policy: Add 2. Quotas: Rate limit calls to | Low |
| Tenant data | Weak binding (IDOR): Attacker uploads file to another user's ID | Auth context | 1. Trusted context: Derive tenant only from auth token 2. Session binding: Bind | High |
| Object namespace | Client-side key gen: Client supplies bucket / key path, enabling overwrites of other objects | Server-generated keys | 1. Opaque IDs: Client only uses 2. Validation: Reject any client-supplied path parameters | High |
| IAM role | Blast radius: Signing principal has | Scoped role | 1. Least privilege: Restrict signer role to specific 2. Env separation: Separate roles for dev / prod | Medium |
| Pre-sign scope | Scope creep: Wildcards or broad prefixes allow overwriting unintended objects | Exact-key signing | 1. Block wildcards: Sign only specific full path uuid / file 2. Allowlist: Assert keys match specific patterns in code | Medium |
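
The "assert keys match specific patterns in code" mitigation can be as small as one guard called immediately before signing. The sketch below assumes a key layout of `quarantine/<tenant>/<32 hex chars>`; that layout, the pattern, and the function name are illustrative choices, not something the pattern prescribes.

```python
import re

# Assumed layout: quarantine/<tenant slug>/<uuid4 hex>. Adjust to your own scheme.
KEY_PATTERN = re.compile(r"quarantine/[a-z0-9-]{1,64}/[0-9a-f]{32}")


def assert_signable_key(key: str) -> str:
    """Return the key unchanged, or refuse to sign anything off-pattern."""
    # fullmatch anchors both ends, so wildcards, extra path segments,
    # and trailing characters are all rejected.
    if not KEY_PATTERN.fullmatch(key):
        raise ValueError("refusing to sign key outside the allowlisted pattern")
    return key
```

Keeping the check next to the signing call means a bug elsewhere in key construction fails closed instead of producing a broadly scoped URL.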

Phase 2: Processing (Storage & Workers)

Focus: Preventing malicious content from crashing the pipeline

| Asset | Threat | Baseline Controls | Mitigation Options | Risk |
|---|---|---|---|---|
| Stored content | The swap (TOCTOU): Client claims benign file type but uploads malware / executable | Quarantine prefix | 1. Finalize gate: Verify size & headers match metadata before marking valid 2. Checksums: Store / verify content-MD5 or SHA256 if supported 3. Scan: Scan / transform in | High |
| App state | Bypassed finalize: App assumes upload is valid just because URL was issued | Async architecture | 1. State gate: Require explicit 2. Isolation: Ensure | High |
| Data at rest | Unencrypted storage: Buckets lack encryption or KMS policy is too broad | Provider defaults | 1. Enforce encryption: Mandate SSE-S3 or SSE-KMS 2. Key policy: Tighten KMS decrypt permissions to smallest principal set 3. Drift detection: Alert on policy changes | Low |
| Processing pipeline | Poison pill: Malformed file crashes parser / worker (RCE / DoS) | Standard containers | 1. Sandbox: Run workers in ephemeral sandboxes (Firecracker / gVisor) 2. Resource limits: Set strict timeouts and memory caps 3. Network: Restrict worker egress | Low |
| Storage capacity | Zombie data: Abandoned uploads (never finalized) accumulate indefinitely, increasing costs | Manual cleanup | 1. Auto-expiry: Configure S3 lifecycle rule to delete objects in 2. Cron: Prune DB sessions where | Low |
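
A finalize gate might look roughly like the following. The `HeadResult` shape is a hypothetical stand-in for whatever your storage client returns from a HEAD request, and the rule that an object must be non-empty is an assumption of this sketch.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class HeadResult:
    """Hypothetical subset of the metadata a storage HEAD call returns."""
    size: int
    sha256: Optional[str]


def finalize_problems(head: Optional[HeadResult], max_bytes: int,
                      expected_sha256: Optional[str]) -> List[str]:
    """Return reasons to reject; an empty list means finalize may proceed."""
    if head is None:
        return ["object missing"]
    problems = []
    if head.size <= 0 or head.size > max_bytes:
        problems.append("size out of bounds")
    # Only compare hashes when the session recorded an expected value.
    if expected_sha256 is not None and head.sha256 != expected_sha256:
        problems.append("checksum mismatch")
    return problems
```

The session moves to finalized only when the list is empty; anything else leaves the object in quarantine for cleanup.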

Phase 3: Serving (Downloads)

Focus: Preventing the file from attacking the user

| Asset | Threat | Baseline Controls | Mitigation Options | Risk |
|---|---|---|---|---|
| User browser | Stored XSS: Inline rendering of HTML / SVG payload executes script | Published-only served | 1. Headers: Force 2. Transcoding: Only allow inline rendering for safe / transformed formats (e.g., re-encoded JPEGs) 3. Sandbox domain: Serve inline content from a separate domain (e.g., | High |
| Download URL | URL Leakage: Logs / Referrers expose signed URL | AuthZ required | 1. No logging: Redact signed query params from all server logs 2. Short TTL: Enforce max TTL (e.g., 5 mins) 3. Alerting: Alert on download spikes | Low |
| Auditability | Silent exfil: Access bypasses API logs (direct S3 access) | API logging | 1. Storage logs: Enable S3 / GCS access logs and centralize them 2. Traceability: Tag objects with | Low |
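
For the "redact signed query params" mitigation, one possible approach is to scrub URLs before they reach a logger. The sketch below blanks every query parameter whose name starts with `x-amz-` or `x-goog-` (case-insensitive), which covers parameters such as `X-Amz-Signature`; the prefix list and function name are assumptions, and other providers will need their own entries.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed prefixes for signing-related parameters; extend for your provider.
SENSITIVE_PREFIXES = ("x-amz-", "x-goog-")


def redact_signed_url(url: str) -> str:
    """Replace signing-related query values so the URL is safe to log."""
    parts = urlsplit(url)
    redacted = [
        (name, "REDACTED" if name.lower().startswith(SENSITIVE_PREFIXES) else value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
    ]
    return urlunsplit(parts._replace(query=urlencode(redacted)))
```

Applying this in a single logging filter is usually easier to audit than remembering to call it at every log site.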

If you proxy instead

If you move uploads / downloads through a gateway, URL leakage and TTL / scope issues shrink because you may no longer issue signed URLs at all. But you take on new primary risks:

DoS / cost (your gateway moves bytes)

availability (hot path)

parsing / inspection attack surface (if you inspect content inline)

operational complexity (backpressure, retries, global scaling, slow clients)

Proxying is not inherently more secure. It just shifts the trade-offs.

Verification checklist

Identity and mapping

Client cannot provide bucket / key (only upload_id / object_id)

Tenant / user derived from auth context and enforced on finalize + download-url issuance

Cross-tenant access attempts return 404 (Not Found), not 403 (Forbidden)
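A minimal sketch of the 404-not-403 rule for download-url issuance follows. The registry is modelled as a plain dict, and treating unpublished objects the same as missing ones is an assumption of this example; `authorize_download` is a hypothetical helper.

```python
from typing import Optional, Tuple


def authorize_download(registry: dict, object_id: str,
                       tenant_id: str) -> Tuple[int, Optional[dict]]:
    """tenant_id comes from the auth token, never from the request body."""
    record = registry.get(object_id)
    # Missing, foreign, and unpublished objects all look identical to the
    # caller, so the response never confirms that another tenant's ID exists.
    if (record is None
            or record["tenant_id"] != tenant_id
            or record["state"] != "published"):
        return 404, None
    return 200, record
```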

Pre-sign correctness

PUT TTL default 120s, GET TTL 60–300s, and max TTL enforced

Exact-key pre-sign only (no prefix / wildcards)

Pre-signed URLs are not logged anywhere; signed query params are redacted
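The TTL rule above is easy to enforce in one place. In this sketch the constants mirror the numbers in the checklist; defaulting a GET to the minimum when no TTL is requested is an assumption, as are the names.

```python
from typing import Optional

PUT_TTL_SECONDS = 120      # fixed; callers cannot override it
GET_TTL_MIN_SECONDS = 60
GET_TTL_MAX_SECONDS = 300


def get_ttl(requested: Optional[int] = None) -> int:
    """Clamp a requested GET TTL into the allowed 60 to 300 second window."""
    if requested is None:
        return GET_TTL_MIN_SECONDS
    return max(GET_TTL_MIN_SECONDS, min(requested, GET_TTL_MAX_SECONDS))
```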

Quarantine → finalize → publish

Objects are unusable until finalize succeeds

Finalize verifies existence and size bounds; checksum / hash stored when applicable

Magic bytes / MIME-type match verified (if inspecting)

Abandoned sessions expire; quarantine cleanup runs
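Session expiry can be sketched as a periodic sweep over the registry. The record fields (`upload_id`, `state`, `created_at` as a Unix timestamp) and the function name are hypothetical; deleting the matching quarantine objects is left to a storage lifecycle rule or a follow-up job.

```python
from typing import Iterable, List

# Sessions that never reached finalize are candidates for cleanup.
UNFINISHED_STATES = {"created", "uploaded"}


def find_abandoned(sessions: Iterable[dict], now: float,
                   max_age_seconds: float) -> List[str]:
    """Return upload_ids of unfinished sessions older than max_age_seconds."""
    return [
        s["upload_id"]
        for s in sessions
        if s["state"] in UNFINISHED_STATES
        and now - s["created_at"] > max_age_seconds
    ]
```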

Serving safety

Downloads default to attachment + nosniff

Inline rendering only happens through a safe transform pipeline
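The attachment + nosniff default corresponds to two standard response headers, `Content-Disposition: attachment` and `X-Content-Type-Options: nosniff`. The sketch below builds them for a download response; the filename sanitising rule and the generic `application/octet-stream` content type are assumptions chosen to keep the example conservative.

```python
from typing import Dict

SAFE_FILENAME_CHARS = "._-"


def download_headers(filename: str) -> Dict[str, str]:
    """Headers that make a browser save the file instead of rendering it."""
    # Keep only ASCII letters, digits, and a few punctuation marks so quotes
    # and CR/LF can never break out of the header value.
    safe = "".join(
        c for c in filename
        if c.isascii() and (c.isalnum() or c in SAFE_FILENAME_CHARS)
    ) or "download"
    return {
        "Content-Disposition": f'attachment; filename="{safe}"',
        "X-Content-Type-Options": "nosniff",
        "Content-Type": "application/octet-stream",
    }
```

How these headers get attached depends on your storage provider and CDN; some let you set them as object metadata at publish time, others as response overrides when signing the GET.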

Detection

Object-level access logs enabled and centralized

Alerts exist for unusual download patterns and destructive actions (delete spikes)

Implementation & Review

The full threat model matrix, architectural diagrams, and a printable verification checklist for this pattern are available in the Secure Patterns repository. Use these artifacts to guide your design reviews and internal audits.